Hi, I am Gregor Hohpe, co-author of the book Enterprise Integration Patterns. I work on and write about asynchronous messaging systems, distributed architectures, and all sorts of enterprise computing and architecture topics.

Find my posts on IT strategy, enterprise architecture, and digital transformation at ArchitectElevator.com.

Most of you have probably noticed that I rarely blog about anything related to Google. That's partly because a lot of the information is confidential and partly because a lot of it is frankly not that interesting (yes, even Google has boring projects). Luckily, an increasing number of Google products feature public APIs, and these are, well, public, so I can talk about them freely without risking being fired. In fact, the Google developer-focused products have reached enough critical mass to convert last year's geo developer event into a full-fledged Developer Day. Sort of like Microsoft's PDC or Sun's JavaOne, but Googley. This funny article highlights the major differences between a Google developer event and a Microsoft developer event so I don't have to.

Developer Day took place in 10 cities around the world. Somehow I found out about the whole gig way too late and failed to put myself in for a speaking slot in Tokyo or Sao Paulo. Hopefully next year. This time I made do with attending the local event in San Jose, together with about 1500 attendees who came to find out more about the latest Google products, see cool demos, interact with the product teams, and grab a free snack or two. Oh, btw, part of being Googley means being free, so attending the event was free of charge.

The first impression was quite positive. First off, the wireless did not suck (unlike JavaOne), and there were free snacks, Lego tables, bean bags, and gigantic lava lamps. The wireless was in fact good enough to post a bunch of pictures to Flickr (hey, being interoperable is Googley). The images are tagged with "GDD07", so give it a try.

Jeff Huber kicked off with the keynote. Apparently Google has become the biggest independent network with over ½ billion unique visitors in a month. So we'd better listen up. Jeff gave a brief overview of the history of Google APIs: the Search API had its debut in 2002, and things got really interesting in 2005 when developers started to reverse engineer the Maps API. Today over 50,000 Web sites use the Google Maps API. The biggest deal in Google's API basket these days is GData. GData is the foundation for many product APIs such as Calendar, Spreadsheets, and Base. Of course, it is in Beta, but that just makes it more exciting.

Google Mashup Editor

Of course Google wouldn't go through all this effort and expense if it didn't have any new products to announce. These days you are totally not cool if you haven't built a mash-up. Come on, do you still live in World 1.0? To make being cool a bit easier, Google announced the Mashup Editor, an editing environment that pulls different feeds together and outputs HTML pages, which include lists, maps, and other elements (Yahoo Pipes more or less targets the same niche). While this is a cool tool, I was hoping that the guy on stage would have actually hand-coded something, no matter how small or insignificant. I understand that keynotes are always pressed for time and you don't want to take any chances. But when it comes to developer tools, actually developing something is unbeatable. And after all we hire only the smartest and brightest, so I should not be asking too much.

Google Gears

The other big news is Google Gears. We all love Google tools and applications, but they have one big limitation: you have to be connected to the Internet to use them. If you live outside of Mountain View (which Google blanketed with free WiFi access) that might not always be the case. That's where Google Gears comes in. Gears caches Web pages (or data) locally and also gives browser applications access to a local database. The ability to run off-line makes any RIA (Rich Internet Application) even richer. But before you convert all your apps, keep in mind that Gears is an experimental release, i.e. not recommended for production environments.
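To give a feel for what a local database buys an application, here is a minimal sketch of the "work offline, sync later" idea. It is not the Gears API (Gears is used from JavaScript in the browser); it is a Python analogy using the standard `sqlite3` module, and the `OfflineNotes` class, its table layout, and the `upload` callback are all hypothetical names made up for illustration.

```python
import sqlite3


class OfflineNotes:
    """Hypothetical note store: writes go to a local database first
    and are pushed to the server whenever a connection is available."""

    def __init__(self, upload):
        # An in-memory database stands in for the browser's local store.
        self._db = sqlite3.connect(":memory:")
        self._db.execute(
            "CREATE TABLE notes ("
            "id INTEGER PRIMARY KEY, body TEXT, synced INTEGER DEFAULT 0)"
        )
        # `upload` is an assumed callback that sends one note to the server.
        self._upload = upload

    def add(self, body):
        """Store a note locally; works with or without a connection."""
        cur = self._db.execute("INSERT INTO notes (body) VALUES (?)", (body,))
        self._db.commit()
        return cur.lastrowid

    def list_notes(self):
        """Read all notes from the local database."""
        rows = self._db.execute("SELECT body FROM notes ORDER BY id")
        return [row[0] for row in rows]

    def sync(self):
        """Upload every note not yet sent and return how many were sent."""
        rows = self._db.execute(
            "SELECT id, body FROM notes WHERE synced = 0 ORDER BY id"
        ).fetchall()
        for note_id, body in rows:
            self._upload(body)
            self._db.execute(
                "UPDATE notes SET synced = 1 WHERE id = ?", (note_id,)
            )
        self._db.commit()
        return len(rows)
```

The application always reads and writes locally, so it keeps working on a plane; calling `sync()` once the connection is back pushes whatever accumulated in the meantime.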

Maplets

The Maps team did not want to be left behind and announced Maplets. These mini applications allow you to plot data from different maplets onto the same map. For example, you can plot hotel prices (from Orbitz) and weather data (from WeatherBug) onto the same map to find the best deal on a hotel outside San Francisco's fog belt.

Gadgets

The keynote also reminded us how much traffic some of the Google Gadgets get. Not surprisingly, a game is near the top: PacMan got 6.7 million views a month, while Notes is a strong contender with 4.7 million views. Think about the exposure (i.e. fame) and the potential AdSense dollars (i.e. money). The keynote demo'd a cool gadget: the Expedia fare calendar. Given a source and destination, it plots the lowest fares on a calendar-like widget. Maybe we are really entering the age of the widget economy.

Sergey

Sergey Brin made a "surprise" appearance. Sergey mused how the Internet can help create developers by supplying dating services, which can lead to human offspring, who then can help develop more apps. A Matrix-like vision of a self-hosted Internet I guess.

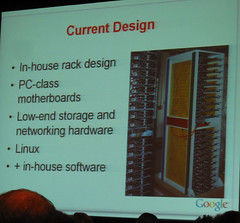

Jeff Dean, a Google Fellow, shared details about Google's core infrastructure. While some of the key components have been described in public papers (e.g. GFS, MapReduce, BigTable), this talk promised to give us a bit more insight into what is really going on in the Google data centers. Amazingly, Jeff shared quite a bit of detail, e.g. real pictures of Google server racks (I have not been able to find one for my public talks) and hard numbers.

Jeff reminded everyone that increasing amounts of data (his back-of-the-napkin computation suggested we are dealing with 5 Exabytes or so per year) and smarter (i.e. computationally more intensive) algorithms cause a constant need for more computing power. Google's strategy is to optimize the price/performance ratio of its computing resources. As a result, Google data centers are not equipped with the latest high-end servers but rather with lots and lots of PC-class machines.

Making the machines themselves more resilient, for example by equipping them with redundant power supplies, typically does not pay off. The large number of machines makes failures a question of when rather than if, so your software infrastructure has to be prepared to deal with them in any case. Once you have that infrastructure, the price increment to bring an individual machine from four 9's to five 9's availability is not warranted. You would rather put the extra money into more machines, which are available most of the time.
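The "when rather than if" argument is easy to check with a little arithmetic. The sketch below is my own back-of-the-napkin version, not numbers from the talk: it assumes machines fail independently, and the fleet size of 10,000 is a hypothetical figure picked purely for illustration.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes


def downtime_minutes_per_year(availability: float) -> float:
    """Expected yearly downtime of a single machine."""
    return (1.0 - availability) * MINUTES_PER_YEAR


def prob_any_machine_down(availability: float, machines: int) -> float:
    """Probability that at least one machine is down at a given moment,
    assuming (simplistically) that machines fail independently."""
    return 1.0 - availability ** machines


if __name__ == "__main__":
    fleet_size = 10_000  # hypothetical, for illustration only
    for label, availability in (("four 9's", 0.9999), ("five 9's", 0.99999)):
        downtime = downtime_minutes_per_year(availability)
        p_down = prob_any_machine_down(availability, fleet_size)
        print(f"{label}: {downtime:.1f} min/year per machine, "
              f"P(some machine down) = {p_down:.2f}")
```

Under these assumptions a single four 9's machine is down for a bit under an hour a year, and a five 9's machine for about five minutes. Across a fleet of this size, though, the chance that something is broken right now is far from negligible either way, so the software has to handle failures regardless of how much you spend on each box.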

Jeff ran through a quick history of Google data centers, starting with the infamous "cork board" machines, named after the cork board that separated the motherboard from the metallic tray. However primitive, this setup allowed 4 motherboards to be fitted on a single tray (and a single power supply). Subsequent generations of server racks have more computing power, cleaner cabling, but maintain the spirit of high-density, low-cost machines.

But this massive computing infrastructure comes to life only in combination with an appropriate software stack. Running programs on this many machines requires dealing with failing machines, huge amounts of data storage, back-ups, restart points etc. To make developing highly parallelized applications easier, Google developed three pieces of core infrastructure: GFS (a distributed file system), MapReduce (a programming model for processing large data sets in parallel), and BigTable (a distributed store for structured data).

All these technologies are described in public papers: http://labs.google.com/papers.
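For readers who have not seen the MapReduce programming model, here is a toy, single-process sketch of the idea using the classic word count example. It only illustrates the map and reduce phases; it is in no way Google's implementation, which distributes the work across many machines and deals with failures and restarts. The function names are my own.

```python
from collections import defaultdict


def map_reduce(inputs, mapper, reducer):
    """Run a mapper over every input record, group the emitted values
    by key, then run a reducer once per key."""
    intermediate = defaultdict(list)
    # Map phase: each record may emit any number of (key, value) pairs.
    for record in inputs:
        for key, value in mapper(record):
            intermediate[key].append(value)
    # Reduce phase: combine all values that share a key.
    return {key: reducer(key, values) for key, values in intermediate.items()}


def word_count_mapper(line):
    """Emit (word, 1) for every word in a line of text."""
    for word in line.lower().split():
        yield word, 1


def word_count_reducer(word, counts):
    """Sum the partial counts for one word."""
    return sum(counts)


if __name__ == "__main__":
    lines = ["the quick fox", "the lazy dog", "The end"]
    print(map_reduce(lines, word_count_mapper, word_count_reducer))
```

The appeal of the model is that the developer writes only the two small functions; because each map call and each reduce call is independent of the others, an infrastructure layer can spread them across machines and rerun the ones that fail.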

I attended two sessions on GData. GData forms the basis for a number of new product APIs, such as Base, Blogger, Calendar, Notebook, Spreadsheets, and Picasa Web. GData follows a relatively simple data model. Requests are made REST style with descriptive URLs and query strings (plus HTTP headers and/or session cookies for authentication). Response data is in Atom format, with Google-specific extensions (for example gd:when and gd:where for calendar events). GData provides a consistent query mechanism, authentication, and off-line concurrency management. Concurrency is optimistic, i.e., resources are not locked, making concurrent updates possible. Because resource links contain unique version identifiers, conflicts (e.g., updates to stale data) can be detected and result in a 409 error.
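The optimistic concurrency scheme is easiest to see in a small simulation. The sketch below is an in-memory stand-in, not the GData client library or its wire protocol: `FeedStore`, `ConflictError`, and the `/feeds/<id>/<version>` link layout are hypothetical names chosen to show how a version identifier embedded in the edit link lets the server reject a stale update with a 409.

```python
from dataclasses import dataclass


class ConflictError(Exception):
    """Stand-in for an HTTP 409 Conflict response."""
    status = 409


@dataclass
class Entry:
    content: str
    version: int = 1


class FeedStore:
    """Hypothetical server-side store with versioned edit links."""

    def __init__(self):
        self._entries = {}

    def _edit_link(self, entry_id):
        # The current version number is part of the link itself.
        return f"/feeds/{entry_id}/{self._entries[entry_id].version}"

    def create(self, entry_id, content):
        self._entries[entry_id] = Entry(content)
        return self._edit_link(entry_id)

    def get(self, entry_id):
        """Return the content plus the edit link for the current version."""
        return self._entries[entry_id].content, self._edit_link(entry_id)

    def update(self, edit_link, new_content):
        """Apply an update only if the edit link is still current."""
        _, _, entry_id, version = edit_link.split("/")
        entry = self._entries[entry_id]
        if int(version) != entry.version:
            # Someone else updated the entry since this link was issued.
            raise ConflictError(f"stale edit link {edit_link}")
        entry.content = new_content
        entry.version += 1
        return self._edit_link(entry_id)


if __name__ == "__main__":
    store = FeedStore()
    store.create("event1", "Lunch at noon")
    _, link_a = store.get("event1")  # client A reads
    _, link_b = store.get("event1")  # client B reads the same version
    store.update(link_a, "Lunch at 1pm")  # A wins
    try:
        store.update(link_b, "Lunch at 2pm")  # B holds a stale link
    except ConflictError as err:
        print("conflict:", err.status)
```

Nothing is locked while the clients hold their copies. The client that lost the race simply re-reads the entry, gets a fresh edit link, and decides whether to reapply its change.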

Using these APIs, someone developed a cool ambient clock gadget that shows busy and free times on an analog dial. Amazingly, the developer was right in the audience. The presenter left ample time for Q&A, which was very much appreciated by the audience. Fielding audience questions as a Google engineer is always a tricky situation because topics can be controversial or touch on confidential issues. Jeff did a good job answering questions without having to resort to the "I am not privileged to comment on such matter" line too often.

Naturally the SOAP Search API question (no new developer keys are being issued) came up. How committed is Google to keeping the new APIs around, especially given that they are free (and free of ads)? Jeff reminded us that he is not in charge of these decisions. According to him, Google is committed to continued support for the GData APIs because they give users access to their own data, i.e. their appointments or photos. One of Google's maxims is to not lock users in, i.e. to allow them to grab their data at any time and take it somewhere else. The APIs play a key part in that promise and are here to stay. Google also derives value because it can observe what the developer community builds on top of its platform.

Jeff clarified that there are currently no SLAs in place for GData APIs. On the upside, Google is pretty good at running high-availability, low-latency services. Product comparisons can be treacherous territory, but it is clear that Google's Mashup Editor and Yahoo Pipes are playing in similar territory. In fact, you can combine the two by using Pipes against GData feeds because they both use the popular Atom and RSS formats.

There was some unhappiness about the way the AdWords API is being evolved. AdWords supports four concurrent versions of the API, causing an API version to be decommissioned four months after a new version is announced. The new set of Google APIs seems more amenable to evolution, so hopefully they will provide a smoother transition for developers, even though they will continue to evolve.

In between the sessions, attendees networked over lunch or in the blogger lounge. The day concluded with a social event on the Google campus. Sadly, one could not obtain an alcoholic beverage without a wristband, which was being issued on the other side of campus. There are some things even Google can't touch.