Hi, I am Gregor Hohpe, co-author of the book Enterprise Integration Patterns. I work on and write about asynchronous messaging systems, distributed architectures, and all sorts of enterprise computing and architecture topics.

Find my posts on IT strategy, enterprise architecture, and digital transformation at ArchitectElevator.com.

DSLs (Domain Specific Languages) are a hot topic these days. Integration is also hot, even though the acronym du jour has evolved from EAI to SOA to EDA to SOA 2.0. While pondering the value of buzzword-compliant architectures, it struck me that a decade ago I created an EAI DSL! And I had almost forgotten.

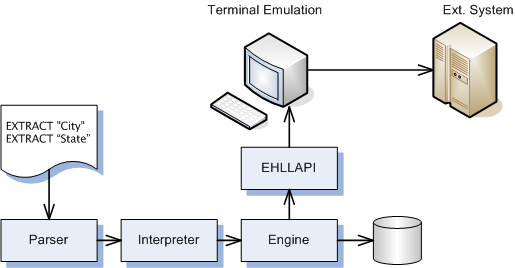

When I worked for AMS, my very first programming assignment was to connect from one state agency to another to retrieve data. Classic integration! These were the days of C and PowerBuilder, and I used a magic tool called EHLLAPI. This tool allows a program to simulate a user accessing another system via a 3270 terminal. EHLLAPI actually stands for Extended High Level Language Application Programming Interface, which is a bit of a euphemism. Essentially, the API provides a single method called hllc. By passing variations of the ominous parameters to this method, one can instruct the interface to perform various functions, such as emulating the user hitting a key or extracting characters from the 80 by 25 screen.
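Because the 80 by 25 screen is exposed to the program as one flat buffer of 2,000 characters, a screen location boils down to a single number. The sketch below converts between row/column and such a linear position. It assumes 1-based positions numbered row by row from the top-left corner; that convention is an assumption here, so check it against your emulator's documentation. `ps_position` and `ps_row_col` are hypothetical helper names.

```c
#include <assert.h>

#define SCREEN_COLS 80
#define SCREEN_ROWS 25

/* Convert a 1-based (row, col) pair into a 1-based linear position,
   numbering cells row by row from the top-left corner (assumed convention). */
int ps_position(int row, int col)
{
    assert(row >= 1 && row <= SCREEN_ROWS);
    assert(col >= 1 && col <= SCREEN_COLS);
    return (row - 1) * SCREEN_COLS + col;
}

/* Inverse of ps_position: split a linear position into row and column. */
void ps_row_col(int position, int *row, int *col)
{
    assert(position >= 1 && position <= SCREEN_ROWS * SCREEN_COLS);
    *row = (position - 1) / SCREEN_COLS + 1;
    *col = (position - 1) % SCREEN_COLS + 1;
}
```

Under this assumption, position 653 falls on row 9, column 13, and `ps_position(25, 80)` returns 2000, the last cell on the screen.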

EHLLAPI is the classic incarnation of Presentation Integration, more commonly referred to as "screen scraping". Screen scraping is widely considered the most "uncool" of all integration technologies, since it is clunky and lacks cool buzzwords. That is, until HTML became mainstream, at which point screen scraping was suddenly elevated to an art form and acquired the much-needed buzz to be taken seriously. Even I could not resist at the time and created InfoGate. Of course, now that we live in a 2.0 world, mashups have surpassed Web screen scraping.

Anyway, I think every project is only as cool as you make it, and I thought this was a pretty cool one. The undeniable strength of screen scraping is that you can obtain automated access to external systems without requiring any changes to those systems. Often, you can even accomplish it without the owner of the other system knowing about it. In our example, all we needed was a simple user ID and password. Of course, it would have been easier to just get a data feed from the other agency, but that would have required a lot of coordination and a pile of paperwork. The running joke was always whether the other agency would notice that they had a very productive user who logged in at 6 am sharp and never took a break.

Looking back at my old code, I was struck by nostalgia. What kind of code did I write in 1994?

```c
/*---------------------------------------------------------------------
 * PROGRAM: script.c
 *
 * AUTHOR(s): Gregor Hohpe
 *
 * DATE: 11/08/94
 *
 * DESCRIPTION:
 *
 * The external agency interface script compiler.
 *---------------------------------------------------------------------*/
```

Well, it doesn't have any unit tests, but at least it is well documented. Maybe the writing was on the wall that I wanted to write about integration some day!

I recall that in the beginning, writing this software was no fun. The API provides a single method:

```c
void hllc(int *func, char *data, int *len, int *rc);
```

Aaaah... the long-gone days of APIs with an int *func parameter. Isn't it great to have an interface that never has to change? Hey, why not just pass a void *? If someone passes in the wrong data structure, too bad. That's what protection faults are for. Interestingly, TheServerSide just posted the video of my "Where did All My Beautiful Code Go?" keynote at TheServerSide Java Symposium. Well, I can attest that it did not go here!
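One way to contain the damage of an `int *func` entry point is to hide it behind small typed wrapper functions, so the rest of the program never handles function codes or raw buffers. Since the real EHLLAPI library is not available here, this sketch stubs out `hllc` with the signature shown above, backed by an in-memory 80x25 screen. The function codes, the return codes, and the use of `*rc` to pass a start position on input are assumptions of this sketch, not documented EHLLAPI values; `copy_screen_text` and `send_keys` are hypothetical names.

```c
#include <assert.h>
#include <string.h>

#define PS_SIZE (80 * 25)

/* Hypothetical function codes; NOT the real EHLLAPI function numbers. */
enum { FN_COPY_PS_TO_STRING = 1, FN_SEND_KEY = 2 };

/* Stand-ins for the emulator's screen buffer and the last keys "typed". */
static char presentation_space[PS_SIZE];
static char last_keys[64];

/* Stub with the same shape as hllc(). For copies, *rc carries the 1-based
   start position on input (an assumption); on output *rc is 0 on success,
   1 for an unknown function code, and 2 for bad parameters. */
void hllc(int *func, char *data, int *len, int *rc)
{
    switch (*func) {
    case FN_COPY_PS_TO_STRING: {
        int start = *rc - 1;
        if (start < 0 || *len < 0 || start + *len > PS_SIZE) { *rc = 2; return; }
        memcpy(data, presentation_space + start, (size_t)*len);
        *rc = 0;
        return;
    }
    case FN_SEND_KEY:
        if (*len < 0 || (size_t)*len >= sizeof last_keys) { *rc = 2; return; }
        memcpy(last_keys, data, (size_t)*len);
        last_keys[*len] = '\0';
        *rc = 0;
        return;
    default:
        *rc = 1;
        return;
    }
}

/* Typed wrapper: copy `length` screen characters starting at `position`
   into `out`, which must hold at least length + 1 bytes. */
int copy_screen_text(int position, char *out, int length)
{
    int func = FN_COPY_PS_TO_STRING, len = length, rc = position;
    assert(out != NULL && length >= 0);
    hllc(&func, out, &len, &rc);
    if (rc == 0)
        out[length] = '\0';   /* terminate only on success */
    return rc;
}

/* Typed wrapper: "type" a key sequence into the emulated terminal. */
int send_keys(const char *keys)
{
    char buf[64];
    int func = FN_SEND_KEY, len, rc = 0;
    size_t n = strlen(keys);
    if (n >= sizeof buf)
        return 2;
    memcpy(buf, keys, n + 1);   /* hllc() wants a mutable char * */
    len = (int)n;
    hllc(&func, buf, &len, &rc);
    return rc;
}
```

The compiler can now catch a swapped argument in a call to `copy_screen_text`, whereas a wrong `func` value passed to the raw entry point only shows up at run time.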

To make matters worse, the biggest weakness of screen scraping is that it is inherently brittle. As soon as the user interface changes, chances are that the integration is broken. I soon figured out that if I did not want to spend the rest of my days passing parameters to hllc, I had better come up with an alternative.

This alternative came in the form of a domain language. I did not so much have the creation of a real language in mind, but I had recently left school, and the Dragon Book had left me with a certain fascination with compilers. So I set out to implement the core screen scraping functions once in a generic engine and then drive the application through a series of scripts, one for each screen to be scraped. Each script would specify where on the screen the information could be found and the name of the database column that should receive each data element. If the screen layout ever changed, all I would have to do was edit the script.
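The core idea, one generic engine plus a per-screen description that is pure data, can be sketched in a few lines of C. This is a simplified, hypothetical variant rather than the original engine: it searches flat screen text for an anchor string and copies a fixed-width slice after it, whereas the actual scripts work with 3270 input fields. The names `field_spec`, `scrape`, `address_screen`, and the sample anchors are made up for illustration.

```c
#include <assert.h>
#include <string.h>

/* One entry of a hypothetical screen description: find `anchor` in the
   screen text, skip `offset` characters past it, copy up to `width`
   characters, and file the value under database column `column`. */
struct field_spec {
    const char *anchor;
    int offset;             /* assumed non-negative */
    int width;              /* assumed non-negative */
    const char *column;
};

/* Screen-specific data: editing this table is all a layout change needs. */
static const struct field_spec address_screen[] = {
    { "STREET:", 1, 15, "Street" },
    { "CITY:",   1, 15, "City"   },
};

/* Generic engine: applies any spec table to any screen text. Stores each
   value (trailing blanks trimmed, at most 31 chars) in values[i]; values
   whose anchor is missing become "". Returns the number of fields found. */
int scrape(const char *screen, const struct field_spec *specs, int count,
           char values[][32])
{
    size_t screen_len;
    int found = 0;

    assert(screen != NULL && specs != NULL && values != NULL);
    screen_len = strlen(screen);
    for (int i = 0; i < count; i++) {
        const char *hit = strstr(screen, specs[i].anchor);
        size_t pos, n;

        values[i][0] = '\0';
        if (hit == NULL)
            continue;                       /* anchor not on this screen */
        pos = (size_t)(hit - screen) + strlen(specs[i].anchor)
              + (size_t)specs[i].offset;
        if (pos > screen_len)
            continue;                       /* value would start past the end */
        n = (size_t)specs[i].width;
        if (n > screen_len - pos) n = screen_len - pos;
        if (n > 31) n = 31;
        memcpy(values[i], screen + pos, n);
        values[i][n] = '\0';
        while (n > 0 && values[i][n - 1] == ' ')
            values[i][--n] = '\0';          /* trim screen padding */
        found++;
    }
    return found;
}
```

The engine knows nothing about any particular screen; a layout change touches only the `address_screen` table, which mirrors the benefit the scripts provided.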

Armed with a general idea of how to build a tokenizer, parser, and interpreter, I set out to define a scripting language that would suit my needs. As I hand-rolled all parts of the system, I kept the syntax very simple.
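A hand-rolled parser for such a line-oriented language can be very small. The sketch below is not the original script.c; it assumes the rules visible in the example script and its description later in this post: each line starts with an upper-case command or the `;` comment indicator, and carries at most one argument, which is a quoted string, an integer, or a bare name such as a LABEL. `parse_line` and `struct script_line` are hypothetical names.

```c
#include <assert.h>
#include <ctype.h>
#include <stdlib.h>
#include <string.h>

/* Kinds of argument a script line can carry (assumed from the examples). */
enum arg_kind { ARG_NONE, ARG_STRING, ARG_INT, ARG_NAME };

struct script_line {
    char command[16];       /* e.g. "EXTRACT"; empty for blank/comment lines */
    enum arg_kind kind;
    char text[64];          /* string or name argument */
    long number;            /* integer argument */
};

/* Parse one line. Returns 0 on success (including blank and ';' comment
   lines) and -1 on a syntax error. */
int parse_line(const char *p, struct script_line *out)
{
    size_t n = 0;

    assert(p != NULL && out != NULL);
    memset(out, 0, sizeof *out);          /* kind = ARG_NONE, strings empty */
    while (isspace((unsigned char)*p))
        p++;
    if (*p == '\0' || *p == ';')
        return 0;                         /* blank line or ';' comment */

    while (isupper((unsigned char)*p)) {  /* command keyword */
        if (n + 1 >= sizeof out->command)
            return -1;
        out->command[n++] = *p++;
    }
    if (n == 0)
        return -1;
    while (*p == ' ' || *p == '\t')
        p++;

    if (*p == '"') {                      /* "quoted string" argument */
        const char *start = p + 1;
        const char *end = strchr(start, '"');
        if (end == NULL || (size_t)(end - start) >= sizeof out->text)
            return -1;
        memcpy(out->text, start, (size_t)(end - start));
        out->kind = ARG_STRING;
        p = end + 1;
    } else if (isdigit((unsigned char)*p)) {   /* integer argument */
        char *end;
        out->number = strtol(p, &end, 10);
        out->kind = ARG_INT;
        p = end;
    } else if (isalpha((unsigned char)*p) || *p == '_') {  /* bare name */
        n = 0;
        while (isalnum((unsigned char)*p) || *p == '_') {
            if (n + 1 >= sizeof out->text)
                return -1;
            out->text[n++] = *p++;
        }
        out->kind = ARG_NAME;
    }
    while (isspace((unsigned char)*p))
        p++;
    return *p == '\0' ? 0 : -1;           /* reject trailing junk */
}
```

Keeping one statement per line means the parser never has to look beyond the current line, which is a large part of why such a language can be built in a day.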

```
;---- scrape XYZ screen ----
LABEL SCREEN_ABC
FIND "ADDRESS"
EXTRACT "Street"
POSITION 653
EXTRACT "City"
EXTRACT "State"
EXTRACT "ZIP"
FIND "INACT DT"
EXTRACT "InactiveDate"
NEXT
EXTRACT "Inactive"
```

This script could be invoked from the code by its label SCREEN_ABC. It would look at the current screen and find the label ADDRESS. It would then extract the next field (3270 screens are organized by input fields), write it into the database column Street, and advance to the next field on the screen. Other commands could skip a field (NEXT) or go to an absolute position on the screen (POSITION) if necessary. The syntax is trivial: each script line starts with a command or a comment indicator. Data types are limited to strings and integers. One might laugh at such a simple language syntax, but it definitely did the trick, and it took less than a day to code up the parser.
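To make these semantics concrete, here is a minimal interpreter sketch in C for the script above. It skips parsing (the script is written directly as a table of pre-parsed commands) and models the screen as a list of fields with their start positions. How the commands behave on a real 3270 screen is an assumption here: FIND moves the cursor to the field after the matching label, POSITION moves it to the first field starting at or after the given position, and "writing to the database" is reduced to collecting column/value pairs in a hypothetical `struct row`. All type and function names are illustrative.

```c
#include <assert.h>
#include <string.h>

/* A 3270-style screen reduced to its fields: start position and text. */
struct field { int pos; const char *text; };

/* Pre-parsed commands (names taken from the script above). */
enum opcode { OP_LABEL, OP_FIND, OP_EXTRACT, OP_NEXT, OP_POSITION };
struct cmd { enum opcode op; const char *arg; int pos; };

/* Stand-in for a database row: column name / value pairs. */
struct row { int count; const char *column[16]; const char *value[16]; };

/* The example script, as a table. */
static const struct cmd screen_abc[] = {
    { OP_LABEL, "SCREEN_ABC", 0 },   { OP_FIND, "ADDRESS", 0 },
    { OP_EXTRACT, "Street", 0 },     { OP_POSITION, NULL, 653 },
    { OP_EXTRACT, "City", 0 },       { OP_EXTRACT, "State", 0 },
    { OP_EXTRACT, "ZIP", 0 },        { OP_FIND, "INACT DT", 0 },
    { OP_EXTRACT, "InactiveDate", 0 }, { OP_NEXT, NULL, 0 },
    { OP_EXTRACT, "Inactive", 0 },
};

/* Run the script that starts at LABEL `label` against `screen`, stopping
   at the next LABEL. Returns 0 on success, -1 on error. */
int run_script(const struct cmd *script, int ncmds, const char *label,
               const struct field *screen, int nfields, struct row *out)
{
    int pc = 0, cur = 0;

    assert(script != NULL && screen != NULL && out != NULL);
    out->count = 0;
    while (pc < ncmds && !(script[pc].op == OP_LABEL &&
                           strcmp(script[pc].arg, label) == 0))
        pc++;                                  /* locate the entry point */
    if (pc == ncmds)
        return -1;

    for (pc++; pc < ncmds && script[pc].op != OP_LABEL; pc++) {
        const struct cmd *c = &script[pc];
        switch (c->op) {
        case OP_FIND: {          /* cursor moves to the field after the label */
            int i = 0;
            while (i < nfields && strcmp(screen[i].text, c->arg) != 0)
                i++;
            if (i == nfields)
                return -1;
            cur = i + 1;
            break;
        }
        case OP_POSITION: {      /* first field starting at or after c->pos */
            int i = 0;
            while (i < nfields && screen[i].pos < c->pos)
                i++;
            cur = i;
            break;
        }
        case OP_EXTRACT:         /* record the current field, then advance */
            if (cur >= nfields || out->count == 16)
                return -1;
            out->column[out->count] = c->arg;
            out->value[out->count] = screen[cur].text;
            out->count++;
            cur++;
            break;
        case OP_NEXT:            /* skip one field */
            cur++;
            break;
        case OP_LABEL:           /* not reached: the loop stops at a LABEL */
            break;
        }
    }
    return 0;
}
```

Against a screen whose fields read ADDRESS, a street, then city, state, and ZIP starting at position 653, followed by INACT DT, a date, one skipped field, and a flag, this produces six column/value pairs, one per EXTRACT.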

So how does this language stack up? It has a very simple syntax but encompasses all the commands required to extract data from a 3270 screen and stick it into a database. One thing the script language cannot do is navigate from screen to screen. It turned out that logging on and navigating through menus required quite a bit of logic that would need control constructs and expressions, definitely outside of what my little parser could handle. So the system used "C" for navigation and the domain language for scraping. Here are the little lessons I remember (given that I did this 12 years ago):

if statements makes the language less useful. Bogus. If you can define the syntax in a few sentences or, better yet, by showing a few example lines, you are in good shape.

I think integration is a great area for the creation of domain languages. For one, integration deals with different programming models, and we already find (for better or worse) a number of domain languages, such as XSL for transformations or BPEL for orchestrations. But these languages are still pretty generic and often difficult to deal with. Creating simpler, more purpose-built languages could help make integration a little easier.
